Mayastor is an OpenEBS project built in Rust utilising the modern NVMEoF system. There are very good tools for inspection and debugging, but this is still frequently seen as a concern. There are lots of acronyms and the documentation assumes a fair level of knowledge. Troubleshooting Ceph can be difficult if you do not understand its architecture. It relies heavily on CPUs and massive parallelisation to provide good cluster performance, so if you don’t have much of those dedicated to Ceph, it is not going to be well-optimised for you.Īlso, if your cluster is small, just running Ceph may eat up a significant amount of the resources you have available. So if Ceph is so great, why not use it for everything?Ĭeph can be rather slow for small clusters. With the help of Rook, the vast majority of the complexity of Ceph is hidden away by a very robust operator, allowing you to control almost everything about your Ceph cluster from fairly simple Kubernetes CRDs. It comes bundled with RadosGW, an S3-compatible object store CephFS, a NFS-like clustered filesystem and RBD, a block storage system. It scales better than almost any other system out there, open source or proprietary, being able to easily add and remove storage over time with no downtime, safely and easily. It is big, has a lot of pieces, and will do just about anything. Rook/CephĬeph is the grandfather of open source storage clusters. If your storage needs are small enough to not need Ceph, use Mayastor. While it may seem like a convenience at first, there are all manner of locking, performance, change control, and reliability concerns inherent in any mount-many situation, so we strongly recommend you avoid this method. NFS is pervasive because it is old and easy, not because it is a good idea. Please note that most people should never use mount-many semantics. The down side of Ceph is that there are a lot of moving parts. 
If you need vast amounts of storage composed of more than a dozen or so disks, we recommend you use Rook to manage Ceph.Īlso, if you need both mount-once and mount-many capabilities, Ceph is your answer.Ĭeph also bundles in an S3-compatible object store. Running a storage cluster can be a very good choice when managing your own storage, and there are two projects we recommend, depending on your situation. Redundancy, scaling capabilities, reliability, speed, maintenance load, and ease of use are all factors you must consider when managing your own storage. Sidero Labs recommends having separate disks (apart from the Talos install disk) to be used for storage. If you are running on a major public cloud, use their block storage. There are a lot of options out there, and it can be fairly bewildering.įor Talos, we try to limit the options somewhat to make the decision-making easier. This frequently sends users down a rabbit hole of researching all the various options for storage backends for their platform, for Kubernetes, and for their workloads. However, unless you are running in a major public cloud, that API may not be hooked up to anything.

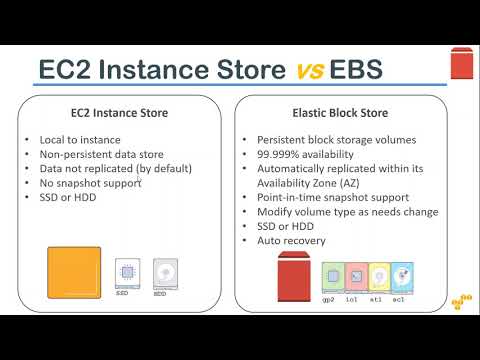

In Kubernetes, using storage in the right way is well-facilitated by the API. Setting up storage for a Kubernetes cluster How to enable workers on your control plane nodes.For safety the script will not write any data to any /dev/ device if it has an existing partition table. This script will format the instance store on boot and enable swap, by default it formats /dev/xvdb which shouldn’t contain data on boot as ephemeral storage (what the instance store sites on top of) is wiped on shutdown. You could use this script with a second EBS volume on the T1 and T2 instances for swap to give that buffer for the small amount of memory provided (Make sure you use the EBS SSD backed storage otherwise you will be charged per million I/O’s). T1 and T2 instances don’t have instance stores or special Swap instance stores. Special swap instance stores are provided with m1.small and m1.medium instances (usually /dev/xvdb3) and mounted automatically (Unless you upgrade from a micro but that is another issue I’ll cover in another post). I created this script so that I could utilise the free instance store provided with my EC2 instance as swap.
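The script itself is not reproduced here, so the following is only a minimal Python sketch of the safety check described for it: refuse to touch a device that already carries a partition table. It assumes 512-byte logical sectors and treats an MBR boot signature or a GPT header signature as a partition table. The function names (`has_partition_table`, `read_header`, `plan_swap_commands`) are hypothetical and not taken from the original script. The sketch only plans the `mkswap` and `swapon` commands and never runs them.

```python
"""Sketch: decide whether a block device looks safe to turn into swap."""

MBR_SIGNATURE = b"\x55\xaa"  # last two bytes of sector 0 on an MBR disk
GPT_SIGNATURE = b"EFI PART"  # first eight bytes of sector 1 on a GPT disk
SECTOR_SIZE = 512            # assumption: 512-byte logical sectors


def has_partition_table(header: bytes) -> bool:
    """Return True if the first two sectors carry an MBR or GPT signature."""
    mbr = header[510:512] == MBR_SIGNATURE
    gpt = header[SECTOR_SIZE:SECTOR_SIZE + 8] == GPT_SIGNATURE
    return mbr or gpt


def read_header(device: str) -> bytes:
    """Read the first two sectors of a device (usually needs root)."""
    with open(device, "rb") as fh:
        return fh.read(2 * SECTOR_SIZE)


def plan_swap_commands(device: str, header: bytes) -> list[list[str]]:
    """Return the commands to run, or an empty list if the device is unsafe."""
    if has_partition_table(header):
        return []  # existing partition table: write nothing
    return [["mkswap", device], ["swapon", device]]


if __name__ == "__main__":
    # Dry run against an in-memory blank header; nothing is executed.
    for command in plan_swap_commands("/dev/xvdb", bytes(2 * SECTOR_SIZE)):
        print(" ".join(command))
```

On a real instance, `read_header("/dev/xvdb")` would supply the header bytes. Keeping the check separate from the device read lets the logic be exercised without touching any disk.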
